A distorted mirror, an Axios article, and the battle for orientation

I read an Axios article the other morning that stuck with me longer than I expected. Not because it was particularly dramatic, but because it triggered a simple question: if what the article suggests is true, do people actually realize what they are looking at?

The article argued that America may not be nearly as broken, hateful, or irreparably divided as our screens suggest. Yes, polarization exists. Anyone paying attention to politics knows that. But the piece pointed to research suggesting something subtler and perhaps more important: the perception of national division may be significantly greater than the underlying reality. In other words, the America many people experience through their screens may not be the same America they encounter in daily life (Axios, America’s social media polarization problem, 2026).

That observation struck me because it aligns with something I have been thinking about for years in a very different context: cognitive warfare.

One of the challenges with the concept is that it often feels abstract. Analysts describe cognitive warfare as the struggle to shape perception, attention, and belief. Military strategists describe it as operations conducted in the “cognitive domain.” But for most people those phrases remain vague. They conjure images of propaganda campaigns, psychological operations, or futuristic AI manipulation.

But sometimes cognitive warfare looks much simpler.

Sometimes it looks like a distorted mirror.

Consider a few basic facts about the American information environment. Pew Research Center reports that about 53 percent of Americans sometimes get news from social media. But the platform most associated with the political conversation, X (formerly Twitter), represents a much smaller slice of the public. Only around 12 percent of U.S. adults regularly get news there. Even so, the platform remains heavily used by journalists, politicians, and policy elites, which gives it an influence over the national narrative far larger than its actual user base (Pew Research Center, Social Media and News Fact Sheet, 2024).

Yet if you spend any time around Washington, the national media ecosystem, or policy debates, you could easily conclude that X represents the entire national conversation.

It does not.

Even more striking is how concentrated the visible political conversation actually is. Pew found that a tiny group of highly active users produces the overwhelming majority of political posts. In one analysis, 10 percent of users generated 97 percent of political tweets, while the vast majority of users rarely posted about politics at all (Pew Research Center, 2019).

A relatively small number of voices can therefore dominate the national narrative.

Now combine that with the incentive structures of modern social media. Algorithms reward engagement. Engagement tends to follow emotional intensity. Content that provokes outrage, fear, moral condemnation, or tribal signaling travels farther and faster than content that is measured or nuanced.

The result is an information environment where the most visible voices are often the most extreme voices.

That does not mean those voices represent the country.

But it does mean they can shape how the country perceives itself.

This is where the Axios article becomes more interesting. Research from the organization More in Common describes what it calls the “perception gap.” Americans routinely overestimate how extreme the other side is and misunderstand what their political opponents actually believe. At the same time, surveys show something paradoxical: while roughly nine in ten Americans believe the country is deeply divided, a large majority also say the differences between Americans are not so great that the country cannot still come together (More in Common, The Perception Gap, 2019).

That gap between perception and lived reality matters.

Because in the cognitive domain, perception is not merely a reflection of reality. It becomes part of the strategic terrain.

If citizens believe their society is collapsing, that belief shapes behavior. Trust erodes. Institutions appear less legitimate. Opposing groups begin to see one another less as fellow citizens and more as existential threats. The social glue that holds democratic systems together—shared legitimacy, restraint, and mutual recognition—begins to weaken.

In other words, the perception of division can become a force multiplier for division itself.

This is where cognitive warfare begins to make sense.

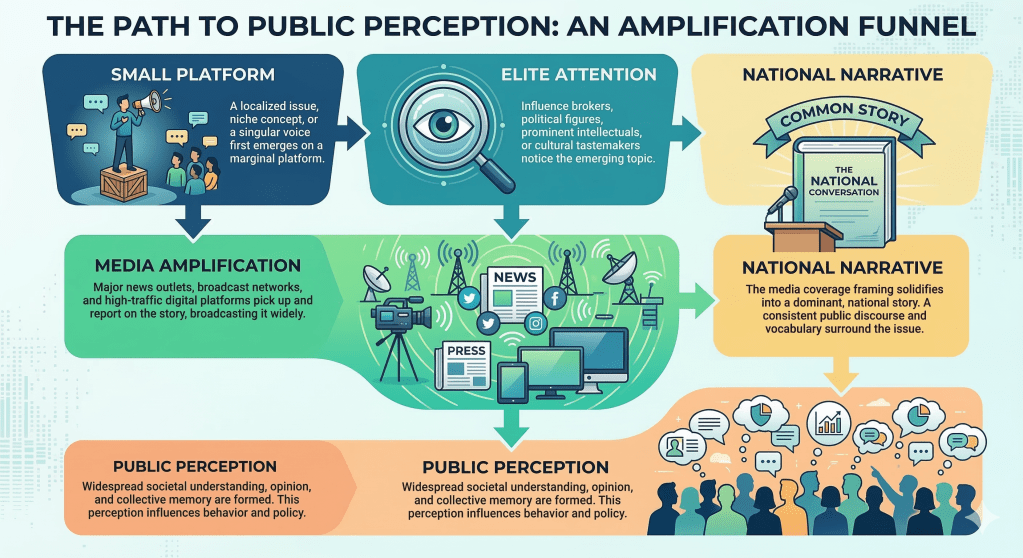

Importantly, none of this requires a central mastermind orchestrating the entire process. The architecture of the information ecosystem itself can produce these effects. Platform algorithms amplify emotional content. Media systems reward conflict narratives. Political entrepreneurs learn quickly that outrage attracts attention.

The system evolves toward volatility on its own.

But once that environment exists, it becomes extremely easy to exploit.

An adversary does not need to invent America’s divisions. It only needs to amplify them. It does not need to persuade every citizen. It only needs to increase mistrust, widen perceived distance, and convince people that their fellow citizens are far more extreme and hostile than they actually are.

The objective is not persuasion.

The objective is disorientation.

This is where the ideas of Air Force strategist John Boyd become especially relevant. Boyd’s famous OODA loop—observe, orient, decide, act—is often reduced to the idea of moving faster than an opponent. But Boyd believed the most important part of the cycle was orientation. Orientation is the lens through which individuals and societies interpret reality. It is shaped by culture, experience, analysis, and incoming information.

If orientation becomes distorted, the rest of the decision cycle begins to break down.

Boyd argued that effective strategy often involves generating uncertainty, confusion, mistrust, and disorder within an opponent’s system. When those conditions emerge, the opponent’s ability to interpret events and make coherent decisions begins to collapse.

Seen through that lens, cognitive warfare is fundamentally a struggle over orientation.

If I can distort how you perceive the world—if I can convince you that your society is more divided, weaker, or more unstable than it actually is—then I can influence how you act without firing a shot.

And that brings us back to the original Axios article.

What made it interesting was not simply its observation about polarization. It was the implication that Americans may sometimes be experiencing a magnified version of their own society, one shaped by algorithmic amplification, concentrated voices, and the emotional economics of modern media.

The nation we see online is not always the nation we live in.

But if enough people believe that distorted picture, the distortion itself begins to shape political reality.

That is what cognitive warfare looks like in practice.

Not always propaganda campaigns or psychological operations. Sometimes it appears as an endless feed of outrage, a handful of amplified voices, and a growing belief that the country has become something darker and more fractured than it actually is.

The battlefield, in other words, may not begin overseas.

Sometimes it begins in the mirror.